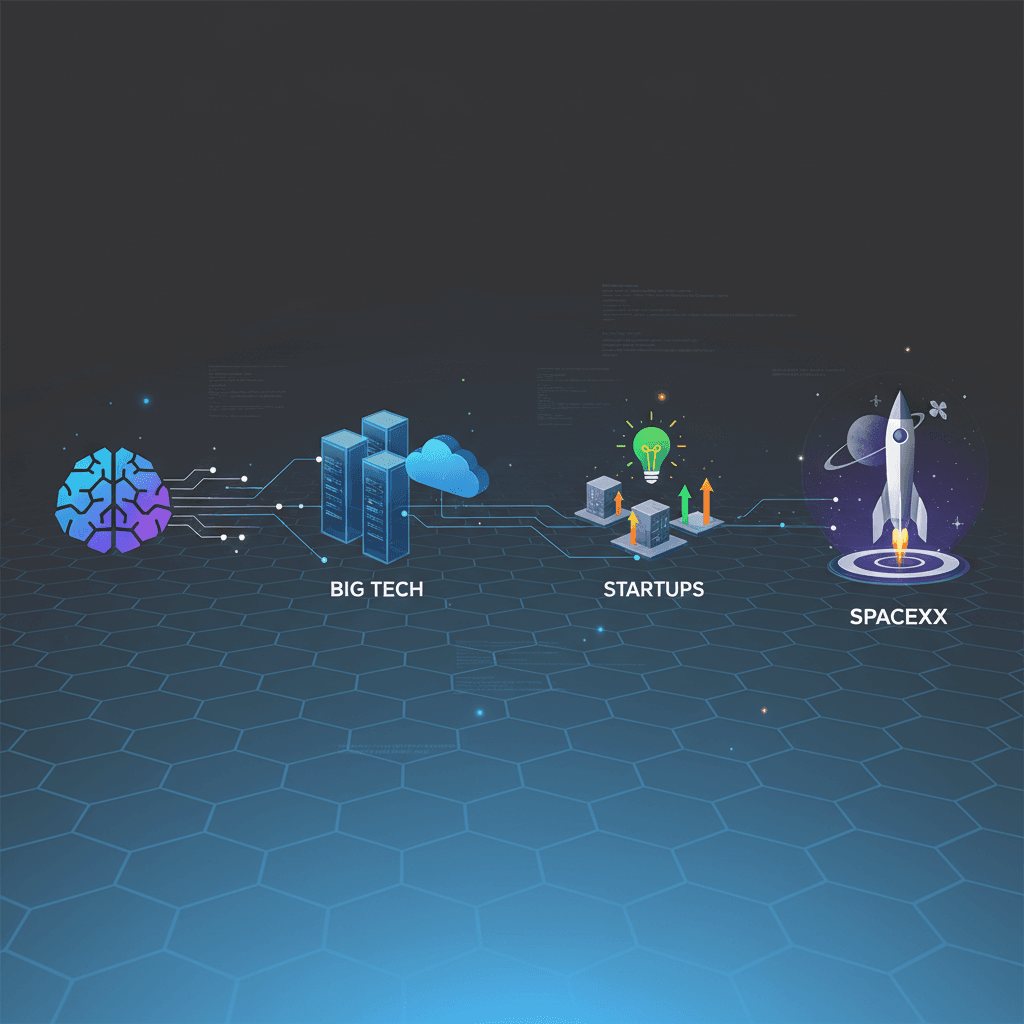

AI’s New Frontier: How Big Tech, Startups, and SpaceX Are Shaping the Next Wave of Intelligence

Context and background

The past three years have accelerated a tectonic shift in artificial intelligence: foundation models moved from research curiosities to commercial platforms, cloud providers raced to offer specialized instances, and a sprawling ecosystem of startups and incumbents aligned around model deployment, optimization, and safety. While big tech continues to steer much of the market through massive compute, data access, and integrated services, a vibrant startup scene is driving innovation at the edges — from efficient model architectures to new developer tools. At the same time, SpaceX and related ventures have introduced a different axis to this landscape: the intersection of connectivity, data collection, and vertically integrated AI ambitions driven by actors who span both software and hardware.

Compute, chips, and the economics of scale

The most visible constraint on large-model progress is compute. Hyperscalers and cloud providers have invested heavily in GPU farms and bespoke accelerators to meet demand. That concentration creates natural advantages for a handful of players who can amortize expensive silicon and cooling infrastructure across massive workloads. Startups face a difficult calculus: build proprietary inference stacks and risk high capital intensity, or design models and tooling tuned to run efficiently on rented cloud instances.

This dynamic is pushing two parallel trends. First, software-centric optimization — quantization, sparse models, and new compilers — is reducing the cost of inference and enabling new use cases on constrained hardware. Second, a resurgence in specialized hardware startups and research into domain-specific accelerators aims to broaden who can economically train and serve large models. The result is a layered market: big tech controls the high-end scale, while startups and third parties push for efficiency and edge-native deployments.
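To make the quantization point above concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is an illustration under stated assumptions, not how any particular framework does it: the function names and the sample weights are hypothetical, and production systems typically quantize per channel or per group and calibrate on real data.

```python
# Minimal sketch of symmetric per-tensor int8 quantization.
# All names and the sample weights are hypothetical, chosen for illustration.

def quantize_int8(weights):
    """Map floats to integer codes in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]


weights = [0.5, -1.27, 0.02, 1.0]  # made-up example values
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Rounding to the nearest code bounds the per-weight error by scale / 2.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

Each weight is stored in one byte instead of four (for 32-bit floats), at the price of a rounding error of at most half the scale. This is the basic trade that lets models run on cheaper or more constrained hardware.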

Big tech’s strategic posture: integration and platformization

Large incumbents are pursuing a three-pronged strategy: embed AI across existing products, provide developer-facing platforms, and secure long-term supply of compute. By integrating models into search, productivity suites, and cloud services, big tech firms convert latent demand into sticky revenue streams. Simultaneously, platform offerings — managed model hosting, fine-tuning services, and prebuilt connectors — lower friction for enterprises wanting to adopt generative AI without the complexity of raw model operations.

This platformization deepens incumbents’ control over developer experiences and data flows, but it also invites regulatory scrutiny and antitrust questions as these firms bundle AI capabilities with dominant cloud and productivity stacks.

Startups: specialization, composability, and risk-taking

Startups remain the innovation crucible. They experiment with model modalities, domain-specific agents, and tools that make models more controllable and cost-effective. Many are pursuing composable stacks: modular components that can be combined to create tailored AI products without training monolithic new models. Others focus on developer ergonomics — observability, prompt engineering platforms, and MLOps — which address the practical pain points enterprises face when moving from pilots to production.

Funding remains robust but selective: investors prize teams that can demonstrate both scientific novelty and a clear path to lower operating costs or a faster time to market. That emphasis favors methods that squeeze more performance from less compute or that open new revenue channels, such as vertical applications in healthcare, finance, and industrial automation.

SpaceX: connectivity, edge data, and the vertical play

While not a conventional AI company, SpaceX influences the AI ecosystem through two channels. First, its Starlink constellation changes the calculus for distributed data collection and edge deployment by extending low-latency connectivity to previously underserved regions. That connectivity widens the potential footprint for deployed AI agents and IoT fleets, enabling edge inference where it was previously impractical.

Second, the rise of operator-driven AI ventures linked to space and aerospace firms underscores an appetite for vertically integrated products that combine hardware, connectivity, and specialized AI software. Organizations that control devices, networks, and data can optimize models for their specific telemetry and operational constraints — a competitive advantage when accuracy and latency have mission-critical implications.

Implications

The coming years will be defined by tension between centralization and decentralization. Centralized compute and platform dominance enable rapid scaling and enterprise adoption, but efficiency-focused innovations and edge connectivity will democratize what’s possible outside the hyperscale cloud. Regulation will increasingly shape competitive dynamics, especially around data governance, model safety, and market consolidation.

For startups, the path forward is pragmatic: specialize where scale is not the primary moat, optimize for cost and latency, and build interoperable components that integrate into larger stacks. For big tech, the challenge is balancing product integration with openness and third-party developer trust.

Space-focused players and connectivity providers add another dimension: by controlling the pipes and endpoints, they can accelerate edge AI adoption and create domain-specific value chains that bypass some traditional cloud dependencies.

Ultimately, the next phase of AI growth will be less about who trains the largest model and more about who can deliver dependable, cost-effective intelligence across the most useful set of real-world contexts.

Outlook

Expect incremental specialization: more efficient models, more composable tools, and deeper co-design between hardware and software. Markets will bifurcate into hyperscale integrated offerings and a diverse ecosystem of niche providers optimized for cost, latency, or unique data. Policy and standardization efforts will be critical in ensuring competition and mitigating systemic risks as AI becomes further embedded in infrastructure and industrial operations.
